Last week, we learned how to interpret the output of the EvaluateAndLog function for scalar expressions. Albeit interesting, the new function would be much less useful if it could only return scalar values. After all, Power BI users can always define a scalar expression as a measure and then study its values in various contexts. The real power of EvaluateAndLog is that it can also wrap around any table expression, the output of which was much harder to visualize until now.

One of the key takeaways from last week’s post is that a scalar expression produces many values in the presence of input columns. Each unique combination of values of the input columns causes a reevaluation of the expression. The same is true for table expressions, which means the same table expression can generate multiple tables in a single query. Such dynamic behavior is what makes DAX powerful and hard to learn at the same time. With the help of the EvaluateAndLog function, we can now, for the first time, see all the intermediate tables instead of just imagining them in our heads.

Let’s try a table-valued EvaluateAndLog with a simple measure:

Measure4 = CountRows(EvaluateAndLog('Sales'))

We inject EvaluateAndLog inside the CountRows function to see which rows are counted.

⚠️ For this week’s examples, we build on last week’s 3-table model, whose diagram is shown again in Figure 1. That’s why our first example is already the 4th measure in the model. A link to the updated pbix file is provided at the end of this article.

⚠️ If you want to try the examples in this post on your own, make sure that you have installed the most recent update of the August 2022 release of Desktop which contains a critical bug fix. The version of your Desktop must be 2.108.997.0 or later.

Adding 'Product'[Color] and [Measure4] to a table visual, we get Figure 2 and two DAXEvaluationLog events, shown in Figures 3 and 4. There are eight tables in Figure 3, one for each product color, and only one table in Figure 4, corresponding to the total value of the table visual. Unlike a scalar expression, a table expression has an “outputs” property in its JSON document, which is a list of columns that define the schema of the table.

Presenting more than one table from the same table expression in DAX Debug Output is an interesting challenge. Since all the tables share the same schema, I chose to show them in a single data grid, just as the multiple values from a single scalar expression are displayed. This also happens to be the way such data is stored in the DAX Engine: rows from tables calculated from the same DAX expression are saved in a single data cache along with the corresponding values of the input columns. To make it easier for users to differentiate between rows from different tables, I added three extra columns to the data grid, whose headers are in light and dark grayish blue colors:

- [Table Number] indicates to which table the row belongs;

- [Row Number] indicates which row in the table;

- [Row Count] indicates the total number of rows in the table.

Data tables can contain many columns and rows, which makes them very expensive to serialize. By default, therefore, the EvaluateAndLog function serializes only up to 10 rows per table into the JSON output, but it also writes the total number of rows as the value of the “rowCount” JSON property to let users know whether any rows were skipped. Users can change the limit with the optional <max rows> parameter of the function. Since intermediate tables are not sorted by the DAX Engine, there is no general way of controlling which subset of rows shows up in the output. However, you can use functions like Filter, TopN, or Sample to select the subset of rows you want to see, e.g.

CountRows(EvaluateAndLog(Sample(10, 'Sales', 'Sales'[ProductKey], Asc)))

But injecting Filter, TopN, or Sample changes the result of the CountRows function, so it is not a desirable workaround. Fortunately, there is a trick that gives better control over the output rows without changing the result of the original expression. I’ll show how to do it next week.
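As a minimal sketch of the optional max rows parameter described above, the measure below raises the per-table limit from the default of 10 rows to 50 without changing the value that CountRows returns. The measure name [Measure4 MaxRows], the label "sales rows", and the value 50 are made up for illustration; the three-argument form (expression, label, max rows) mirrors the CrossJoin example later in this post.

```dax
Measure4 MaxRows =
    // Hypothetical measure name, label, and limit, for illustration only.
    // The third argument caps the number of rows serialized per table;
    // "rowCount" in the JSON output still reports the true table size.
    CountRows(EvaluateAndLog('Sales', "sales rows", 50))
```

Because EvaluateAndLog passes the full table through to CountRows, only the logged output should be affected by the limit, not the measure value. Which 50 rows appear is still not under your control, since the table is unsorted.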

In addition to the row count limit per table, DAX Engine also limits the maximum size of the JSON text. Once the JSON output becomes too big, DAX Engine stops serializing further data and only writes what is needed to keep the result a valid JSON document. Moreover, DAX Engine inserts a special JSON property to inform users of the truncation. For example, the following expression

CountRows(EvaluateAndLog(CrossJoin('Product', 'Customer'), "large output", 10000))

would produce a large JSON string with the following property toward the end.

"notice": "Data truncated due to event size limit."

Currently, the threshold of the JSON size limit is set at 1,000,000 characters, but it’s subject to change in the future. Large JSON strings are slow to parse and display in the data grid of DAX Debug Output. When that happens during your debugging session, you can check the “Parse JSON on demand” option to switch from automatic parsing to on-demand parsing. If the hard limit disappoints you because you want to use DAX Engine’s JSON serialization ability to export large amounts of calculated data, be warned that this is not a primary function of Power BI, so performance will become an issue when the data volume is large. That said, there are a couple of extra easter eggs hidden in the product which may allow you to do so anyway when they become publicly available.

Let’s end the post with a real-life debugging example. Around the time when I was writing the first post, I was asked to help debug a measure that didn’t return the desired result. In order to show the expression using our demo model, I rewrote the problematic DAX expressions into the following two measures:

Uniq Products = DistinctCount('Sales'[ProductKey])

Measure5 =

Var _temp = Summarize('Sales', 'Sales'[CustomerKey], "Uniq Products", [Uniq Products])

Var _currCustomer = SelectedValue('Sales'[CustomerKey])

Var _prevCustomer = TopN(1, Filter(_temp, 'Sales'[CustomerKey] < _currCustomer), 'Sales'[CustomerKey], Desc)

Return [Uniq Products] - MinX(_prevCustomer, [Uniq Products])

The expression for [Measure5] is complex and inefficient but the idea was to show the contrast between an old way and a new way (yet another easter egg hidden in the product) of calculating the difference of measure values between the current row and the previous row in a table visual.

[Measure5] works in four steps:

1. Calculate a two-column table of customers and the corresponding numbers of unique products purchased by them.

2. Find the current customer in the evaluation context.

3. Find the previous customer from the intermediate table in Step 1 ordered by the 'Sales'[CustomerKey] column in descending order.

4. Calculate the difference between the numbers of unique products purchased by the current customer and by the previous customer.

But when both measures were added to a table visual, the delta values were clearly wrong (Figure 5). By the way, since the expression is O(N²), I had to apply a filter on the 'Sales'[CustomerKey] column to limit the number of rows to 30 for the calculation to finish quickly.

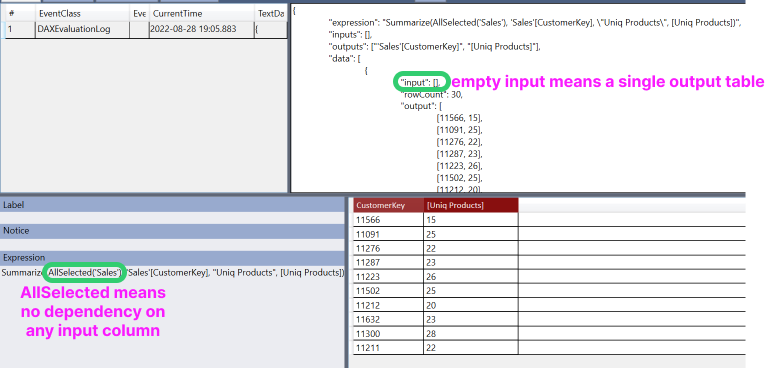

To find out what’s wrong with the expression, let’s wrap EvaluateAndLog around the Summarize function to see if we even get the first step right. We’d like to see a single table with all 30 customers and their product counts so we can navigate from any customer to the previous one. But the actual EvaluateAndLog output consists of 30 single-row tables, one table per customer. That’s because in DAX a table name by itself means the table as filtered by the current filter context. Since 'Sales'[CustomerKey] is in the filter context, the first parameter to Summarize, 'Sales', is filtered by the current [CustomerKey] value, which limits 'Sales' to the transactions belonging to a single customer. Summarize then groups the filtered 'Sales' by [CustomerKey] and returns a single row. This analysis can be easily verified by wrapping EvaluateAndLog directly around 'Sales' to see, Figure 8, that it only returns rows that match the values of the input column. The fix is to replace 'Sales' with AllSelected('Sales') as the first parameter to the Summarize function, after which we get the desired output, Figure 8, as well as the correct intermediate table of step 1, Figure 9.
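Putting the fix together, here is a sketch of what the corrected and still instrumented measure could look like. The measure name [Measure5 Fixed], the label "step 1", and the max rows value of 30 (chosen so that all 30 filtered customers fit in the log) are my assumptions for illustration; the only change to the logic is the first parameter of Summarize.

```dax
Measure5 Fixed =
    // Hypothetical name, label, and row limit, for illustration only.
    // AllSelected('Sales') replaces the plain 'Sales' reference so that
    // Summarize is no longer restricted to the current customer's rows.
    Var _temp =
        EvaluateAndLog(
            Summarize(AllSelected('Sales'), 'Sales'[CustomerKey], "Uniq Products", [Uniq Products]),
            "step 1",
            30
        )
    Var _currCustomer = SelectedValue('Sales'[CustomerKey])
    Var _prevCustomer = TopN(1, Filter(_temp, 'Sales'[CustomerKey] < _currCustomer), 'Sales'[CustomerKey], Desc)
    Return [Uniq Products] - MinX(_prevCustomer, [Uniq Products])
```

Once the logged intermediate table looks right, the EvaluateAndLog wrapper can be removed, leaving just the Summarize call over AllSelected('Sales').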

Today, we studied by examples the table version of the EvaluateAndLog function. Key takeaways from today’s lesson are:

- Both scalar and table expressions are reevaluated for each unique combination of values from the input columns and can therefore generate multiple values or tables in a single query.

- EvaluateAndLog imposes a default limit of 10 rows per table but includes a “rowCount” property in the JSON document to inform users of the true size of the table. Since the table is not sorted, the choice of those 10 rows is effectively arbitrary from the user’s perspective.

- EvaluateAndLog imposes a limit of roughly 1,000,000 characters per JSON string but will keep the final output a valid JSON document.

- EvaluateAndLog inserts a special “notice” JSON property when its output is truncated due to the size limit.

External Links

Download the latest version of Power BI Desktop if you want to try out today’s examples.

The pbix file used in today’s examples can be downloaded from here.

The current version of DAX Debug Output is here. You must have admin rights on the machine and agree to install the software from an unknown publisher. Download both DAXDebugOutputSetup.msi and setup.exe to your machine and run setup.exe.

Now, those hidden easter eggs …. 🙂
